Hi everyone, today we are going to do a short tutorial on unsharp masking with Python and OpenCV.

Unsharp masking, despite what the name may suggest, is a processing technique used to sharpen images, that is, to make edges and interfaces in your image look crisper.

Reading this post you’ll learn how to implement unsharp masking with OpenCV, how to tune its strength and, as a bonus, how to turn a sharpening effect into blurring and vice versa. But let’s not get ahead of ourselves, and go through it step by step.

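Unsharp masking works in two steps:

1. Calculate the Laplacian of the image, which picks out its edges.
2. Subtract a fraction of the Laplacian from the original image.

Or, in pseudocode: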

    sharp_image = image - a * Laplacian(image)

Here image is our original image and a is a number smaller than 1, for instance 0.2.
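Before moving to a real image, the pseudocode can be tried on a one-dimensional edge. This is only a minimal NumPy sketch: the helper laplacian_1d, the sample values and the kernel [1, -2, 1] used to approximate the Laplacian are assumptions made for illustration, not part of the OpenCV code in this post.

```python
import numpy as np

def laplacian_1d(signal):
    """Second difference with the kernel [1, -2, 1]; the two end samples are left at 0."""
    lap = np.zeros_like(signal, dtype=float)
    lap[1:-1] = signal[:-2] - 2.0 * signal[1:-1] + signal[2:]
    return lap

# A soft edge going from 10 to 50 (made-up values)
image = np.array([10.0, 10.0, 10.0, 20.0, 40.0, 50.0, 50.0, 50.0])
a = 0.2

lap = laplacian_1d(image)
sharp_image = image - a * lap

print(lap)          # zero in the flat parts, positive then negative across the edge
print(sharp_image)  # dips to 8 before the edge and overshoots to 52 after it
```

The flat parts of the signal are untouched, while the jump in the middle of the edge grows from 20 to 24. That small undershoot and overshoot is what we perceive as a crisper edge.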

Let’s see this on a real image with some actual Python code.

import cv2

import matplotlib.pyplot as plt

from scipy.ndimage.filters import median_filter

import numpy as np

original_image = plt.imread('leuven.jpg').astype('uint16')

# Convert to grayscale

gray_image = cv2.cvtColor(original_image, cv2.COLOR_BGR2GRAY)

# Median filtering

gray_image_mf = median_filter(gray_image, 1)

# Calculate the Laplacian

lap = cv2.Laplacian(gray_image_mf,cv2.CV_64F)

# Calculate the sharpened image

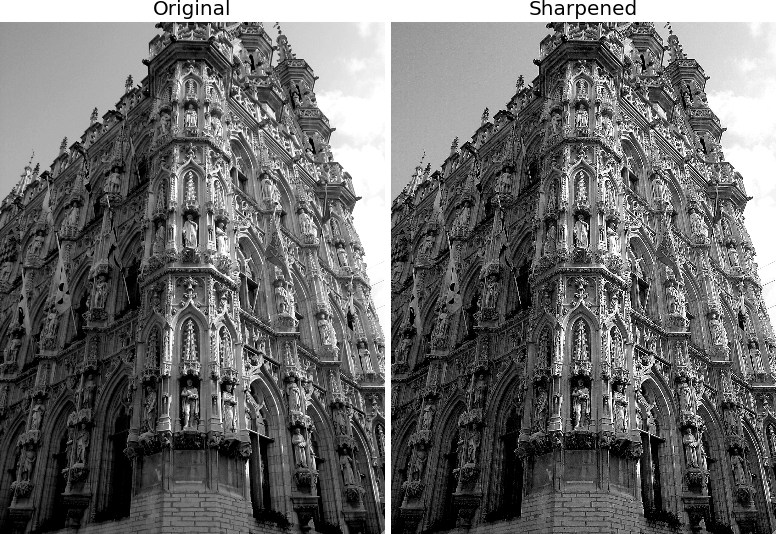

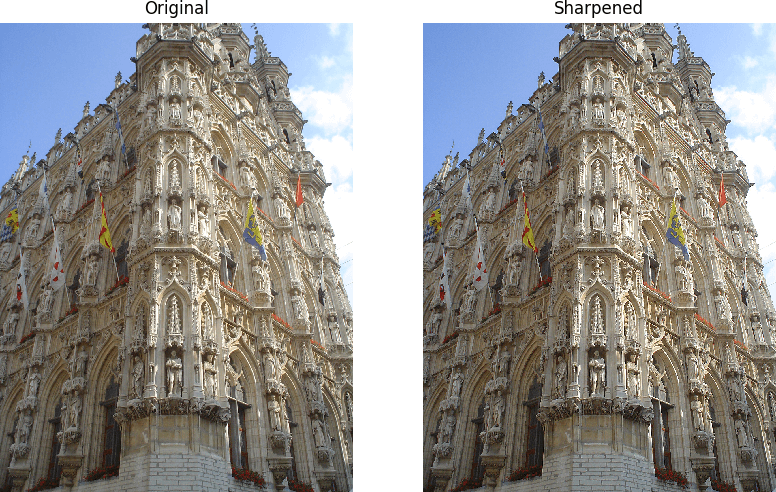

sharp = gray_image - 0.7*lapAnd here’s the result

A word of wisdom about the code, before moving on.

You might have noticed that we run a median filter on the image before calculating the Laplacian. The reason is that the Laplacian tends to amplify the noise in the image. So, to get a better result, we first apply a mild edge-preserving filter, such as a median filter, which reduces the noise while, at the same time, preserving the sharpness of the edges.
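To illustrate what edge-preserving means here, the sketch below compares a median filter with a plain moving average on a tiny one-dimensional signal containing one noisy spike and one genuine edge. The helper filter_1d and the sample values are hypothetical and written with NumPy only; the code in this post uses median_filter from SciPy instead.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def filter_1d(signal, size, reducer):
    """Apply reducer (np.median or np.mean) over a sliding window of odd length `size`."""
    padded = np.pad(signal, size // 2, mode="edge")
    windows = sliding_window_view(padded, size)
    return reducer(windows, axis=-1)

# Flat region with one noisy spike (90), followed by a real edge from 10 to 50
signal = np.array([10.0, 10.0, 90.0, 10.0, 10.0, 50.0, 50.0, 50.0, 50.0])

median = filter_1d(signal, 3, np.median)
mean = filter_1d(signal, 3, np.mean)

print(median)             # spike removed, edge still jumps straight from 10 to 50
print(np.round(mean, 1))  # spike smeared over its neighbours, edge softened
```

In this toy case the median removes the spike completely and leaves the edge untouched, whereas the moving average spreads the spike around and blurs the edge.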

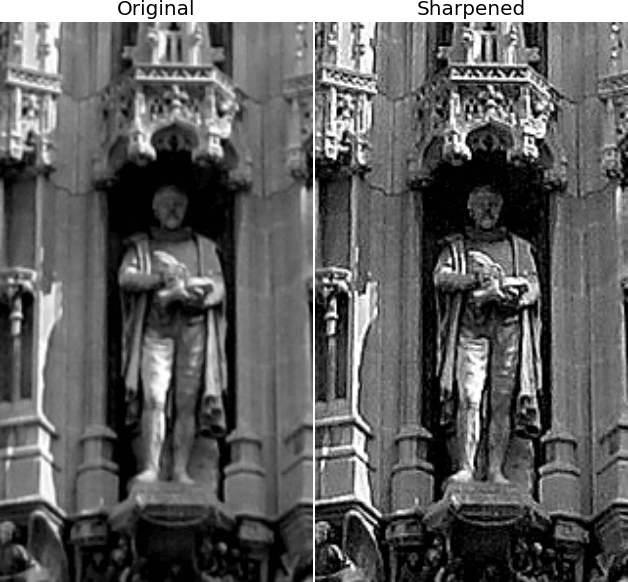

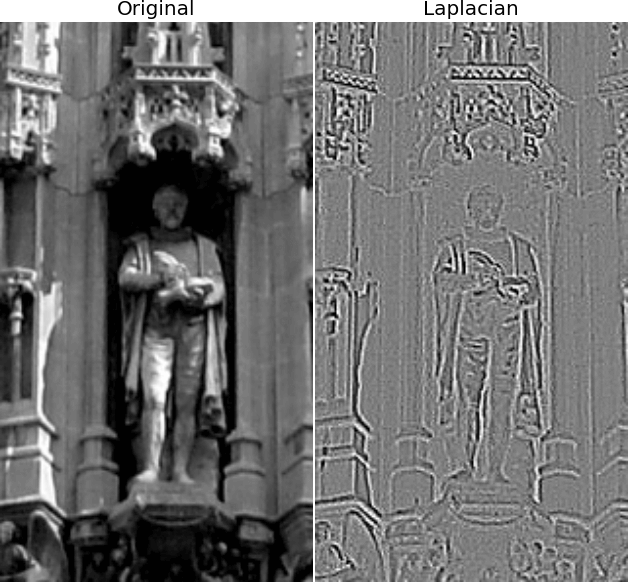

The Laplacian of an image is essentially an edge detector. Smooth regions of an image have a very small Laplacian. Edges and interfaces have a large (positive or negative) Laplacian.

Look at the image below: the Laplacian of our image is zero almost everywhere (the bits in grey), and it is very positive or very negative around the edges.

It’s this black-white (or white-black) fringe that, once subtracted from the image (that is, inverted and added to it), makes a crisper version of the original image.

Taking away the Laplacian changes the image only around the edges (because the Laplacian is basically zero everywhere else). Hence the edges will appear sharper.
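The same behaviour can be checked on a synthetic image. The sketch below builds a bright square on a dark background and computes a 4-neighbour Laplacian with plain NumPy. The helper laplacian_4n is an assumption made for illustration. It uses the same 3x3 kernel as cv2.Laplacian with its default aperture, although the treatment of the image border may differ.

```python
import numpy as np

def laplacian_4n(img):
    """4-neighbour Laplacian: up + down + left + right - 4 * centre (edges replicated)."""
    img = img.astype(float)
    p = np.pad(img, 1, mode="edge")
    return p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:] - 4.0 * img

# 8x8 dark image with a bright 4x4 square in the middle
img = np.zeros((8, 8))
img[2:6, 2:6] = 100

lap = laplacian_4n(img)
print(lap.astype(int))
```

The printed array is zero in the background and in the middle of the square. Just outside the square the values are positive, and just inside they are negative, which is the two-sided fringe described above.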

That takes us back to the original question: where the heck is the ‘unsharp’ mask? Well, the idea is that if taking away the Laplacian sharpens an image, we would logically expect that adding the Laplacian to an image creates a smoother version of that image. In other words, the Laplacian of an image is approximated (up to a scale factor) by the difference between a smoothed (unsharp) version of the image and the original image. This is where the name of this image processing algorithm comes from.
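This link between smoothing and the Laplacian can be verified numerically in one dimension. In the sketch below, which is only an illustration with made-up values, the smoothed signal is obtained with the binomial kernel [1, 2, 1] / 4 and the Laplacian with the kernel [1, -2, 1]. For this particular pair of kernels the relation is exact, with a scale factor of 0.25. For other smoothing filters, such as a Gaussian blur, it holds only approximately.

```python
import numpy as np

image = np.array([10.0, 10.0, 10.0, 20.0, 40.0, 50.0, 50.0, 50.0])
padded = np.pad(image, 1, mode="edge")

# Smoothed ("unsharp") copy: weighted average with the kernel [1, 2, 1] / 4
smoothed = 0.25 * padded[:-2] + 0.5 * padded[1:-1] + 0.25 * padded[2:]

# Discrete Laplacian with the kernel [1, -2, 1]
laplacian = padded[:-2] - 2.0 * padded[1:-1] + padded[2:]

print(smoothed - image)   # difference between the smoothed and the original signal
print(0.25 * laplacian)   # the same numbers
```

Put differently, adding a quarter of the Laplacian to the signal gives back exactly the smoothed signal in this example.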

We can write this insight in pseudo-code:

    sharp_image = image - a * (unsharp - image) = (1+a)*image - a*unsharp

Here unsharp is the smoothed image, and the scale factor between the Laplacian and the difference is absorbed into a.

Well, to work with RGB images (ahem, with usual images) we’ll have to apply what we have learned so far to each channel of the image. In addition, we need to look out and correct for distortion in the histogram that can introduce artefacts.

OK, first let’s wrap our unsharp mask code into a neat function:

    def unsharp(image, sigma, strength):

        # Median filtering (sigma is the window size of the filter)
        image_mf = median_filter(image, sigma)

        # Calculate the Laplacian
        lap = cv2.Laplacian(image_mf, cv2.CV_64F)

        # Calculate the sharpened image
        sharp = image - strength*lap

        return sharp

As you can see, we introduced the parameters sigma (the window size of the median filter, which controls how strongly it acts) and strength (what we called a in the pseudo-code above) that tunes the amount of Laplacian we want to add or take away.

After that we run the function on each of the three channels of an RGB image in turn.

    original_image = plt.imread('leuven.jpg')

    sharp1 = np.zeros_like(original_image)
    for i in range(3):
        sharp1[:,:,i] = unsharp(original_image[:,:,i], 5, 0.8)

And here’s what we get.

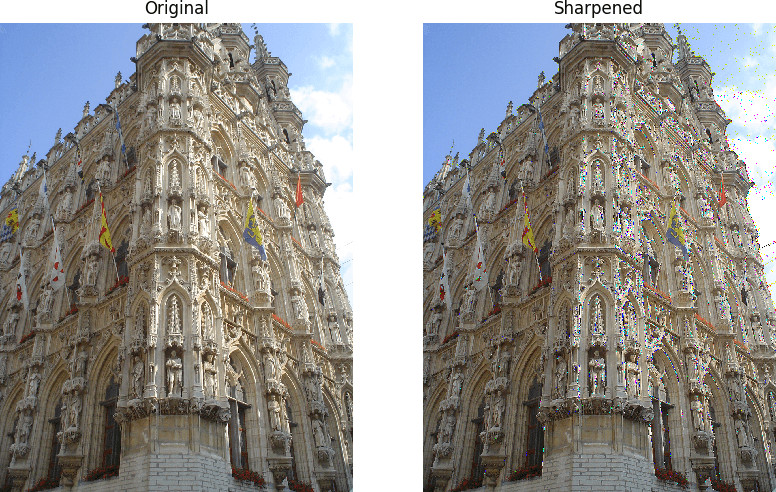

You can immediately see the problem. There is some serious distortion of many pixels, especially around interfaces and edges. These pixels are assigned the wrong colour, compared to their surroundings. This problem was not visible in a grayscale image, as one can always rescale the intensity in a relative way, so that the image will still look visually OK. We can’t do the same thing with an RGB image though, since it is the relative strength of the different channels that gives each pixel its colour. By changing the relative strength as we did, we scramble the colours of some of the pixels!

If we take a closer look, we’ll find that the maximum and minimum values of the sharpened image are no longer contained within the 0-255 range of a normal 8-bit image. To see that, you could try returning sharp.astype("uint16") in the unsharp masking function. To avoid this problem, we simply have to saturate those pixels in either direction. Let’s modify the function in the following way:

    def unsharp(image, sigma, strength):

        # Median filtering
        image_mf = median_filter(image, sigma)

        # Calculate the Laplacian
        lap = cv2.Laplacian(image_mf, cv2.CV_64F)

        # Calculate the sharpened image
        sharp = image - strength*lap

        # Saturate the pixels in either direction
        sharp[sharp>255] = 255
        sharp[sharp<0] = 0

        return sharp

In this way values larger than 255 are flattened back to 255, and likewise negative values are set to zero. And here’s the new result.

The image is obviously sharper and the distortions are gone!
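The difference between letting values wrap around and saturating them can be seen on three sample numbers. This is a sketch under stated assumptions: the integers below merely stand in for sharpened values that have left the 0-255 range, and np.clip is shown as an equivalent way of writing the two saturation lines in the function above.

```python
import numpy as np

# Integer stand-ins for sharpened values, two of them outside the 0-255 range
values = np.array([-20, 100, 300], dtype=np.int16)

# Casting straight to 8 bits wraps around modulo 256: -20 becomes 236, 300 becomes 44
wrapped = values.astype(np.uint8)

# Clipping first saturates instead: -20 becomes 0, 300 becomes 255
saturated = np.clip(values, 0, 255).astype(np.uint8)

print(wrapped)
print(saturated)
```

A value that should be slightly darker than black ends up almost white when it wraps, which is consistent with the wrongly coloured pixels seen around the edges. After clipping it simply stays black.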

Remember that little parameter strength we introduced in the function definition? As anticipated before, we can use it to tune the action of the unsharp masking. Specifically, if we make it negative, that is if we add the Laplacian instead of subtracting it, the sharpening turns into a blurring. Try it for yourself!
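If you want a quick check without loading an image, the sketch below applies the same formula to a one-dimensional edge and measures the largest jump between neighbouring samples for a positive, a zero and a negative strength. The helper unsharp_1d is a hypothetical stand-in for the function above (no median filter, kernel [1, -2, 1] for the Laplacian), and the values are made up.

```python
import numpy as np

def unsharp_1d(signal, strength):
    """1D stand-in for the unsharp function: signal - strength * Laplacian."""
    padded = np.pad(signal, 1, mode="edge")
    lap = padded[:-2] - 2.0 * padded[1:-1] + padded[2:]
    return signal - strength * lap

edge = np.array([10.0, 10.0, 10.0, 20.0, 40.0, 50.0, 50.0, 50.0])

# Largest jump between neighbouring samples, as a rough measure of edge steepness
steepness = {s: float(np.diff(unsharp_1d(edge, s)).max()) for s in (0.2, 0.0, -0.2)}
print(steepness)
```

With these numbers the steepest jump is 24 for a strength of 0.2, 20 for the untouched signal and 16 for a strength of -0.2, so flipping the sign does turn the sharpening into a mild blur.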

That’s all for today, thanks for reading!
